Understanding ETL and ELT Workflows in Data Engineering: An Easy Guide with Examples

Learn how to process your data with ETL and ELT workflows, and make informed data-driven decisions.

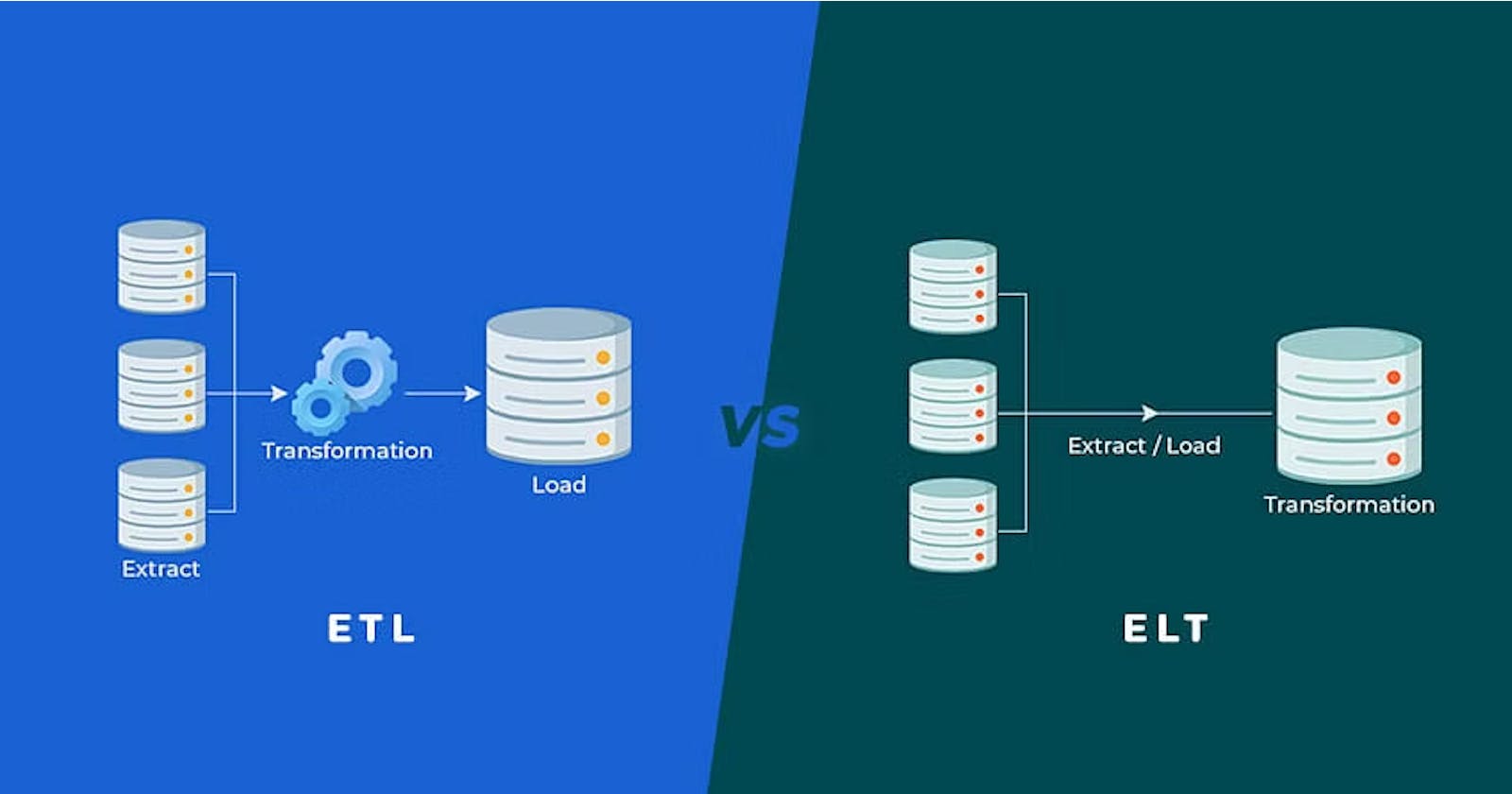

Data engineering is a complex field where many different technologies, frameworks, and techniques come into play. Two of the most common data processing workflows data engineers use are ETL and ELT. ETL stands for Extract, Transform, and Load, while ELT stands for Extract, Load, and Transform. In this article, we will delve into the workings of these workflows and provide examples to help you understand them better.

Introduction to ETL and ELT

Before we dive into the differences between ETL and ELT workflows, let's first understand their overall concepts. ETL and ELT are approaches used for processing data from one or more sources and delivering it to a target location where data can be further analyzed. This target location could be a data warehouse, a data lake, a business intelligence system, or any other system that requires clean, structured data for decision-making.

Both workflows are built from the same three steps, listed here in ETL order:

Extract: Data is extracted from one or more source systems.

Transform: The data is cleaned, enriched, and reshaped according to the requirements of the analysis or the target system.

Load: The data is loaded into the target system.

The key difference between ETL and ELT lies in the order in which the transform step occurs.
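The difference in ordering can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the function names, the sample records, and the plain list standing in for the target system are all assumptions made for the example.

```python
# Illustrative sketch: the same three steps composed in two different orders.
# All names and sample records here are hypothetical.

def extract():
    """Pull raw records from a (pretend) source system."""
    return [
        {"name": " Alice ", "amount": "10"},
        {"name": "Bob", "amount": "oops"},  # deliberately invalid amount
    ]


def transform(records):
    """Trim names, cast amounts to int, and drop rows that fail to parse."""
    cleaned = []
    for record in records:
        try:
            amount = int(record["amount"])
        except ValueError:
            continue  # invalid row is discarded
        cleaned.append({"name": record["name"].strip(), "amount": amount})
    return cleaned


def load(records, target):
    """Append records to the target (a list standing in for a warehouse)."""
    target.extend(records)
    return target


def run_etl():
    # ETL: transform runs before anything reaches the target.
    target = []
    load(transform(extract()), target)
    return target


def run_elt():
    # ELT: raw data lands in the target first and is transformed afterwards.
    target = []
    load(extract(), target)
    raw_copy = list(target)       # the raw rows remain available
    modeled = transform(target)   # transformation happens "inside" the target
    return raw_copy, modeled
```

In the ETL variant the invalid row never reaches the target, while in the ELT variant the raw rows are kept and the cleaned view is derived from them later.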

ETL Workflow

In an ETL workflow, the transform step is performed after the data is extracted and before it is loaded into the target system. The main benefit of this approach is that data from all source systems is standardized and made consistent before it reaches the target. It also simplifies loading, because only valid data is written to the target system. ETL workflows typically rely on dedicated transformation tooling, such as ETL frameworks (e.g., Apache NiFi, Talend) and data integration platforms.

As an example, consider data extracted from three different sources: a CRM system, a web analytics platform, and a social media platform. The data is transformed using an ETL tool and then loaded into a data warehouse for further analysis.
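A rough sketch of such an ETL pipeline in Python follows. It is only an illustration under stated assumptions: the sample rows, the `contacts` table, and the field names are hypothetical, and an in-memory SQLite database stands in for the data warehouse rather than a real ETL tool.

```python
import sqlite3

# Hypothetical sample records standing in for the three source systems.
CRM_ROWS = [
    {"email": "Ana@Example.com ", "source_id": 1},
    {"email": None, "source_id": 2},  # missing email, should be rejected
]
WEB_ROWS = [{"email": "bo@example.com", "source_id": 7}]
SOCIAL_ROWS = [{"email": "ANA@example.com", "source_id": 42}]


def extract():
    """Yield (source_name, row) pairs from every source."""
    sources = (("crm", CRM_ROWS), ("web", WEB_ROWS), ("social", SOCIAL_ROWS))
    for name, rows in sources:
        for row in rows:
            yield name, row


def transform(pairs):
    """Standardize emails and drop invalid rows before anything is loaded."""
    for name, row in pairs:
        if not row["email"]:
            continue  # only valid data continues to the load step
        yield (name, row["source_id"], row["email"].strip().lower())


def load(rows, conn):
    """Write the already-clean rows into the (stand-in) warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS contacts "
        "(source TEXT, source_id INTEGER, email TEXT)"
    )
    conn.executemany("INSERT INTO contacts VALUES (?, ?, ?)", rows)
    conn.commit()


def run_etl(conn):
    """Extract, then transform, then load; return what ended up in the target."""
    load(transform(extract()), conn)
    query = "SELECT source, email FROM contacts ORDER BY source"
    return conn.execute(query).fetchall()
```

Calling `run_etl(sqlite3.connect(":memory:"))` runs the whole pipeline. Because the transform step runs first, the row without an email never reaches the `contacts` table, and every email is stored in the same lowercase form regardless of which source it came from.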

ELT Workflow

An ELT workflow performs the transform step after the data has been extracted and loaded into the target system. This approach provides more flexibility because the raw data stays available in the target system, where data analysts can transform it as needed using SQL queries or analytics tools. ELT workflows are often used in big data environments, where data volumes are too large to transform efficiently in a traditional ETL workflow before loading.

As an example, consider data extracted from various sources and loaded directly into the Hadoop Distributed File System (HDFS). Transformations are then performed using Apache Hive, data warehouse software that lets analysts query and analyze large datasets stored in HDFS with a SQL-like language.
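The load-first, transform-later pattern can be sketched as follows. Note the substitution: to keep the example self-contained and runnable, an in-memory SQLite database stands in for HDFS and Hive, so the SQL dialect is SQLite's, not HiveQL. The table names and sample events are hypothetical.

```python
import sqlite3

# Hypothetical raw events, loaded exactly as they arrive (values are text).
RAW_EVENTS = [
    ("2024-01-01", "page_view", "12"),
    ("2024-01-01", "page_view", "n/a"),  # dirty value, still loaded as-is
    ("2024-01-02", "click", "3"),
]


def load_raw(conn, rows):
    """Load step: write untransformed rows straight into the target."""
    conn.execute(
        "CREATE TABLE raw_events (event_date TEXT, event_type TEXT, value TEXT)"
    )
    conn.executemany("INSERT INTO raw_events VALUES (?, ?, ?)", rows)
    conn.commit()


def transform_in_target(conn):
    """Transform step: derive a clean table with SQL inside the target."""
    conn.execute(
        """
        CREATE TABLE daily_totals AS
        SELECT event_date,
               event_type,
               SUM(CAST(value AS INTEGER)) AS total
        FROM raw_events
        WHERE value <> '' AND value NOT GLOB '*[^0-9]*'  -- digits only
        GROUP BY event_date, event_type
        """
    )
    conn.commit()


def run_elt(conn):
    """Extract is implied by RAW_EVENTS; load first, then transform."""
    load_raw(conn, RAW_EVENTS)
    transform_in_target(conn)
    query = (
        "SELECT event_date, event_type, total "
        "FROM daily_totals ORDER BY event_date"
    )
    return conn.execute(query).fetchall()
```

Unlike the ETL case, the dirty row is still present in `raw_events` after the run. Only the derived `daily_totals` table filters it out, so an analyst can later apply a different transformation to the same raw data without re-extracting it.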

Here's an example of ETL & ELT workflows:

Which Workflow to Choose?

Both ETL and ELT workflows are useful, and the choice between them depends on the specific requirements of the target system. For example, an ETL approach might be more appropriate if you're working with structured data that needs to be standardized and cleansed before loading. On the other hand, if you're working with large volumes of raw or unstructured data that need to be available for analysis quickly, an ELT approach might be the better option.

Conclusion

Data processing workflows can be complicated, but they become more manageable once you understand the underlying concepts. In this article, we have delved into the concepts of ETL and ELT workflows and provided examples to help you understand them better. The choice of workflow depends on the specific requirements of the target system, and data engineers need to weigh the pros and cons of each approach before making a decision.